Core Platform: DevIntel

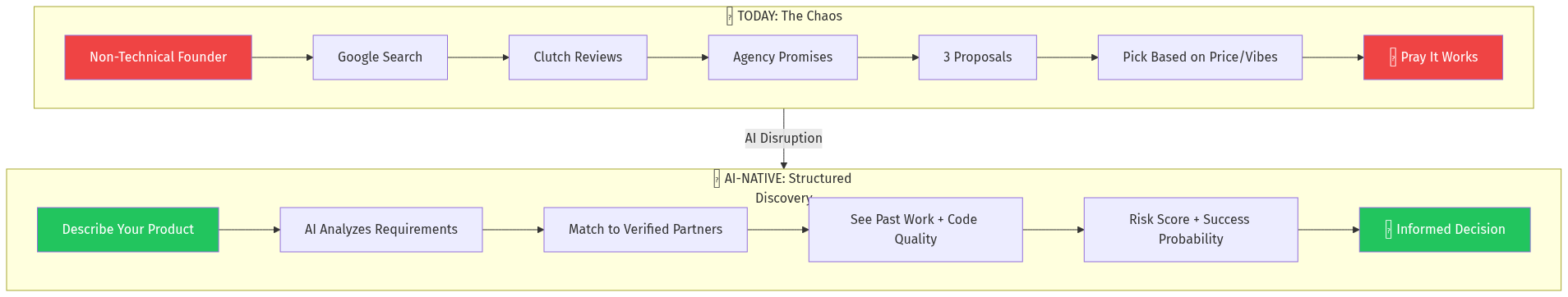

For Founders (Demand Side):

- Describe project in natural language

- AI generates technical requirements spec

- Receive shortlist of 5-7 best-fit agencies with success probability scores

- View code quality metrics, outcome history, communication patterns

- Access red flag reports and due diligence summaries

For Agencies (Supply Side):

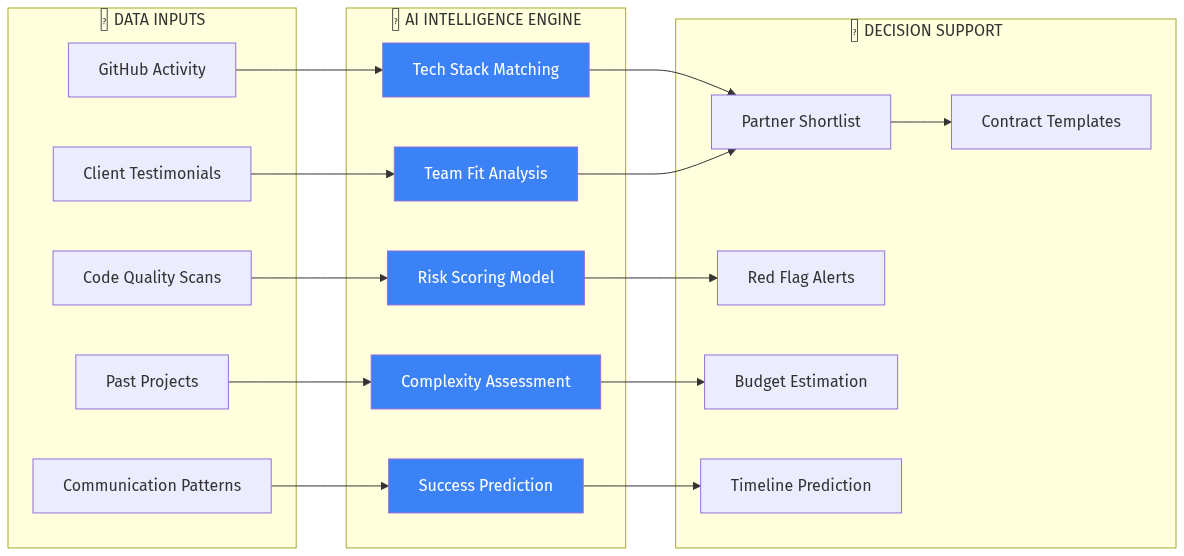

- Connect GitHub, Jira, and communication tools for automated quality scoring

- Earn "Verified Outcomes" badges through tracked project results

- Access lead flow from qualified founders

- Receive AI-generated competitive positioning insights

Key Features

Intelligent Requirement Extraction

- Founder describes idea conversationally

- AI generates PRD-level specification

- Complexity score calculated automatically

- Tech stack recommendations provided

Agency Intelligence Scoring

- Code Quality Index (public repos, open-source contributions)

- Communication Score (response patterns, documentation quality)

- Outcome History (tracked results from past projects)

- Team Stability Score (LinkedIn turnover analysis)

- Financial Health Indicator (growth signals, client concentration)
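One way the five signals above could roll up into a single agency score is a weighted average. The sketch below is illustrative only: the weights, the 0-100 scale, and the names `WEIGHTS`, `AgencySignals`, and `composite_score` are assumptions, not part of the DevIntel design.

```python
from dataclasses import dataclass

# Hypothetical weights (sum to 1.0). The five signals come from the list above;
# how they are actually combined is not specified.
WEIGHTS = {
    "code_quality": 0.30,
    "communication": 0.20,
    "outcome_history": 0.30,
    "team_stability": 0.10,
    "financial_health": 0.10,
}


@dataclass
class AgencySignals:
    """Each signal is assumed to be normalized to a 0-100 scale."""
    code_quality: float
    communication: float
    outcome_history: float
    team_stability: float
    financial_health: float


def composite_score(signals: AgencySignals) -> float:
    """Return the weighted average of the five signals (0-100)."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = getattr(signals, name)
        if not 0 <= value <= 100:
            raise ValueError(f"{name} must be in [0, 100], got {value}")
        total += weight * value
    return total


if __name__ == "__main__":
    example = AgencySignals(80, 70, 90, 60, 50)
    print(f"composite score: {composite_score(example):.1f}")
```

A weighted average keeps each signal's contribution visible, which matters if agencies are expected to act on their scores.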

Predictive Matching Engine

- Project complexity → required capability mapping

- Success probability based on historical patterns

- Risk factors specific to each project-agency pairing
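The three steps above can be sketched as a small ranking routine. Everything here is a simplified assumption: the complexity tiers, the `REQUIRED_CAPABILITIES` mapping, the `Agency` record, and the use of a Laplace-smoothed historical success rate as the "success probability" are placeholders for whatever model the platform would actually use.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from a project's complexity tier to required capabilities.
REQUIRED_CAPABILITIES = {
    "low": {"web"},
    "medium": {"web", "mobile"},
    "high": {"web", "mobile", "ml"},
}


@dataclass
class Agency:
    name: str
    capabilities: set
    # Tracked outcomes per complexity tier: tier -> (successes, total projects)
    history: dict = field(default_factory=dict)


def success_probability(agency: Agency, tier: str) -> float:
    """Laplace-smoothed success rate on past projects of the same tier."""
    successes, total = agency.history.get(tier, (0, 0))
    return (successes + 1) / (total + 2)


def risk_factors(agency: Agency, tier: str) -> list:
    """Risks specific to this agency for a project of this tier."""
    missing = REQUIRED_CAPABILITIES[tier] - agency.capabilities
    risks = [f"missing capability: {cap}" for cap in sorted(missing)]
    if agency.history.get(tier, (0, 0))[1] == 0:
        risks.append(f"no tracked {tier}-complexity projects")
    return risks


def shortlist(agencies: list, tier: str, k: int = 7) -> list:
    """Return up to k (name, probability, risks) tuples, best match first."""
    ranked = sorted(agencies, key=lambda a: success_probability(a, tier), reverse=True)
    return [(a.name, success_probability(a, tier), risk_factors(a, tier)) for a in ranked[:k]]


if __name__ == "__main__":
    pool = [
        Agency("B", {"web"}),
        Agency("A", {"web", "mobile", "ml"}, {"high": (8, 10)}),
    ]
    for name, prob, risks in shortlist(pool, "high"):
        print(name, f"{prob:.2f}", risks)
```

Smoothing keeps an agency with no tracked history at a neutral 0.5 rather than 0 or 1, and the missing track record is reported separately as a risk factor.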

Ongoing Project Intelligence

- Milestone tracking dashboards

- Communication health monitoring

- Early warning system for at-risk projects

- Mediation and escalation support

Mental Model: Second-Order Thinking

If this succeeds, what happens next?

First-order: The best agencies get more leads, while poor agencies lose visibility

Second-order: Agencies invest in actual quality improvements to boost scores

Third-order: Industry-wide quality standards emerge, similar to restaurant health grades

Fourth-order: The platform becomes the credentialing authority for software development